We are officially past the honeymoon phase of generative AI. Just a few years ago, marketing teams were sprinting to implement AI for faster content production and hyper-personalized campaigns. Today, as we navigate 2026, the conversation has shifted sharply from “What can this technology do?” to “How much risk are we taking by using it?”

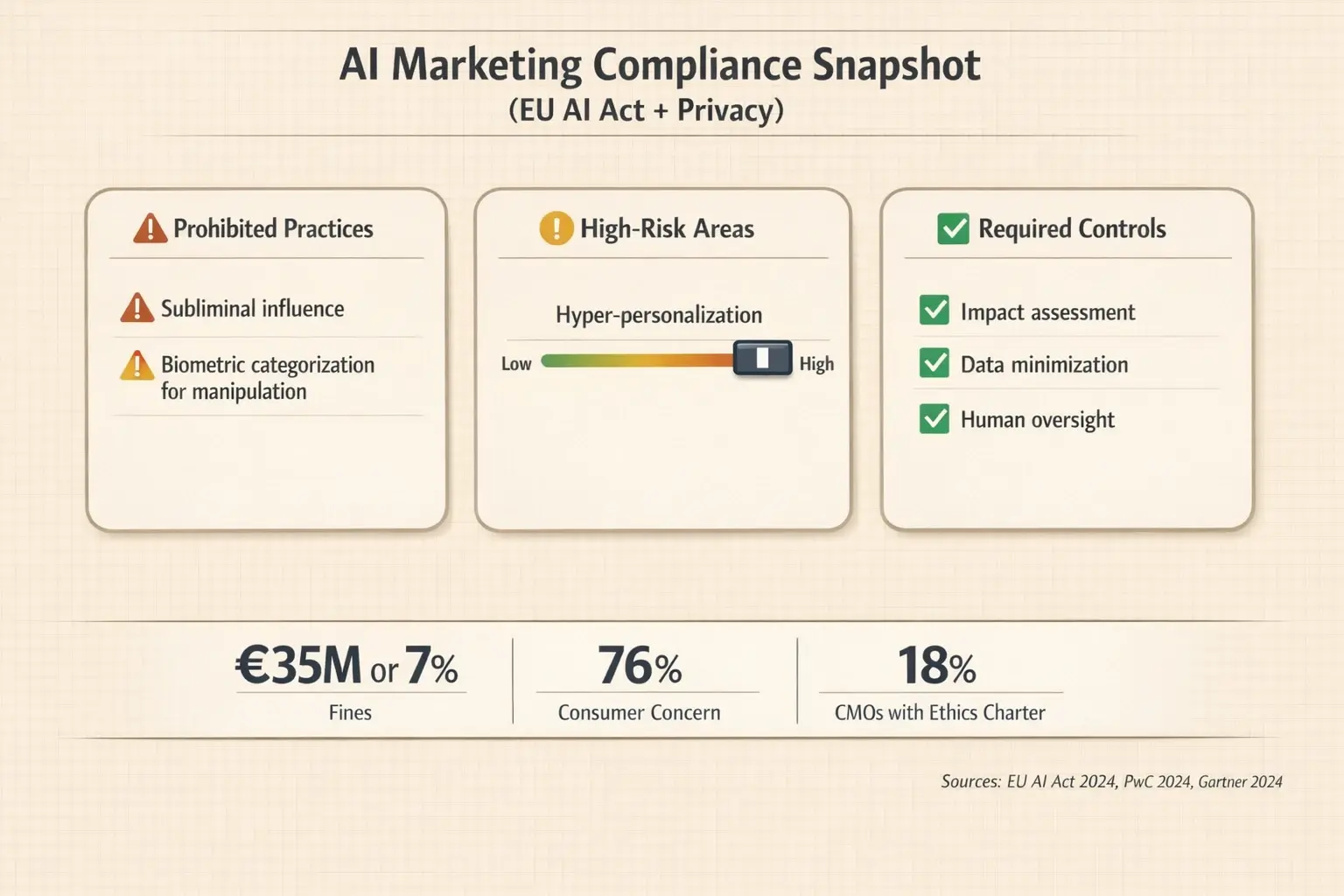

If you’re currently evaluating AI tools for your marketing stack, you already know the stakes have never been higher. According to recent Gartner data, while 65% of marketing teams actively use generative AI, only 18% of CMOs have a formalized AI Ethics Charter in place. That disconnect is a massive liability. With 76% of consumers now expressing deep concern over how AI-driven marketing uses their data, adopting AI without a governance framework isn’t just an ethical blind spot; it’s a direct threat to your brand equity and bottom line.

Let’s cut through the theoretical noise and look at exactly how leading marketing teams are currently evaluating AI risks, mitigating algorithmic bias, and building governance frameworks that protect their brands without sacrificing innovation.

The New Regulatory Baseline: From Best Practices to Boardroom Liability

The days of self-policing AI ethics ended when the EU AI Act entered into force in 2024. Today, those regulations act as a de facto global baseline for AI compliance, influencing US executive orders and local privacy laws. Marketers must understand that the AI Act isn’t just for software engineers; several of its provisions apply directly to marketing practices.

Under the current framework, AI systems that use “subliminal techniques” to manipulate behavior, or “biometric categorization” to infer sensitive personal traits, are prohibited. We aren’t talking about a slap on the wrist. Fines for deploying prohibited AI practices can reach €35 million or 7% of a company’s global annual turnover, whichever is higher.

When you evaluate new marketing automation platforms, you can no longer just look at feature sets. You must run a Compliance and Risk Check. Does the vendor offer transparency into their training data? Can they provide a Privacy Impact Assessment (PIA)? If a tool operates as a “black box,” it is effectively a legal landmine for your organization.
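One way to make that check repeatable is to record each vendor's answers in a small structure and list the gaps. The sketch below is only an illustration: the class, field names, and the three checks are assumptions drawn from the questions above, not a legal standard or any vendor's API.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class VendorAssessment:
    """Answers gathered during a vendor review (all field names are illustrative)."""
    name: str
    discloses_training_data: bool  # vendor documents where its training data came from
    provides_pia: bool             # vendor can supply a Privacy Impact Assessment
    explains_decisions: bool       # outputs can be traced or explained (not a "black box")


def compliance_gaps(vendor: VendorAssessment) -> List[str]:
    """Return the unmet checks; an empty list means no gaps were found."""
    checks = {
        "training data transparency": vendor.discloses_training_data,
        "Privacy Impact Assessment": vendor.provides_pia,
        "explainable outputs": vendor.explains_decisions,
    }
    return [label for label, passed in checks.items() if not passed]


# Hypothetical vendor used only to demonstrate the check.
vendor = VendorAssessment(
    name="ExampleVendor",
    discloses_training_data=True,
    provides_pia=False,
    explains_decisions=False,
)
gaps = compliance_gaps(vendor)
print(f"{vendor.name}: {len(gaps)} gap(s): {gaps}")
```

A non-empty list is a prompt for a conversation with the vendor and your legal team, not an automatic verdict.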

Navigating the Gray Area: The Manipulation vs. Persuasion Spectrum

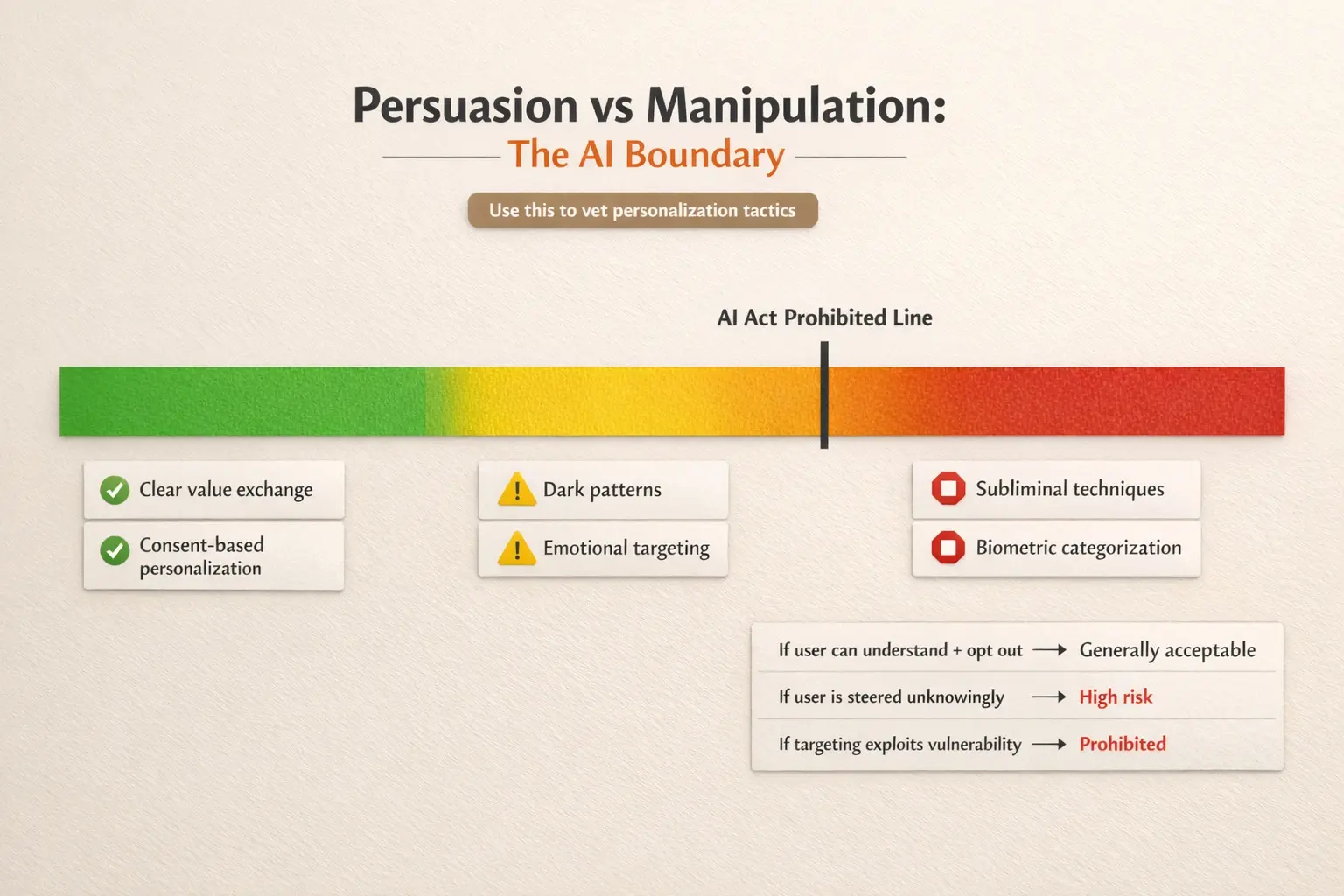

Marketing has always been about persuasion. But AI introduces a scale and precision that can easily cross the line into manipulation. The underlying question for many marketers evaluating AI tools today is where exactly that boundary sits: how far can we push hyper-personalization before it becomes illegal? The two ends of the spectrum look like this:

- Ethical Nudging: Using AI to analyze past purchase behavior to recommend a genuinely relevant product. The user retains full agency, and the recommendation algorithm is transparent.

- Manipulative Steering: Deploying predictive algorithms that identify users in moments of emotional distress or cognitive vulnerability, then serving them synthetic, hyper-personalized content designed to bypass conscious decision-making.

The “AI-Juul” Deep Dive: A Modern Warning on Predatory Targeting

To understand what prohibited manipulation looks like in practice, consider the historical backlash against Juul’s youth targeting, updated for the AI era. Imagine a modern app using predictive analytics to identify teenagers struggling with anxiety. Instead of static ads, the platform deploys autonomous AI agents to generate synthetic peer influencers who engage these teens on social media, subtly promoting a gambling app or a restrictive diet program.

This isn’t sci-fi; the capability exists today. Teams leveraging aggressive social media growth hacking techniques must audit their algorithms to ensure they aren’t inadvertently stepping into predatory targeting. What looks like “high conversion” on a dashboard might actually be an algorithmic exploitation of a vulnerable demographic.
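A simple first audit is to list the input features a targeting model uses and compare them against a denylist of vulnerability signals. The sketch below assumes you can export feature names from your platform; the denylist entries and feature names are hypothetical examples echoing the scenarios above, not a regulatory definition.

```python
# Illustrative denylist; adapt it with your legal and compliance teams.
SENSITIVE_SIGNALS = {
    "emotional_distress",
    "anxiety_score",
    "is_minor",
    "cognitive_vulnerability",
}


def flag_sensitive_features(model_features):
    """Return the targeting features that appear on the denylist, sorted for stable output."""
    return sorted(set(model_features) & SENSITIVE_SIGNALS)


# Hypothetical input features of a lookalike-audience model.
features = ["past_purchases", "anxiety_score", "session_length", "is_minor"]
print(flag_sensitive_features(features))
```

An exact-name match like this will miss proxies (for example, late-night browsing standing in for distress), so treat a clean result as a starting point rather than a clearance.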

Detecting and Defeating Algorithmic Bias in Your MarTech Stack

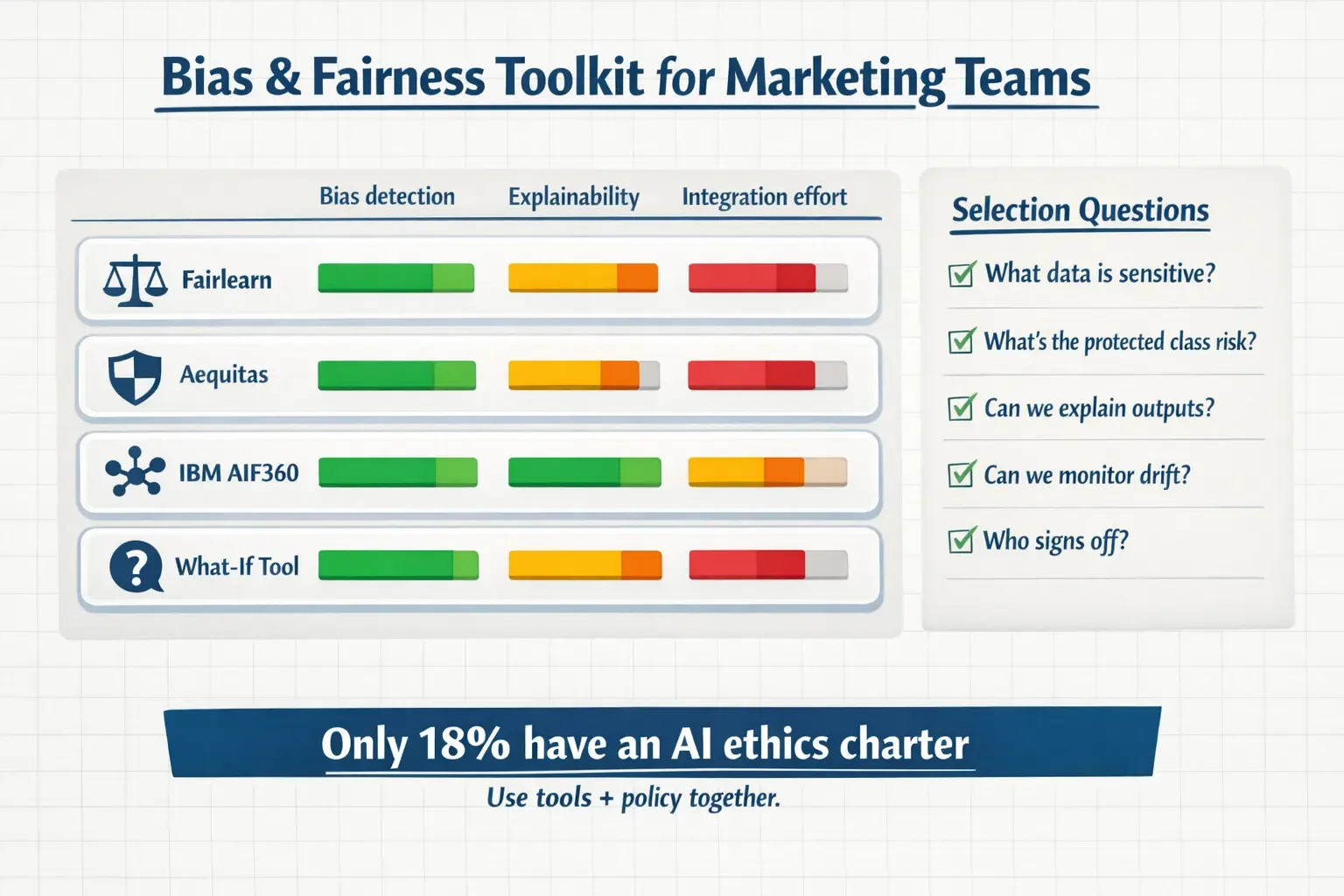

A massive friction point for teams evaluating AI solutions is algorithmic bias. You want to avoid becoming the next catastrophic headline. We’ve seen major tech companies pull recruitment and advertising algorithms because they penalized female candidates or excluded minority neighborhoods from housing ads.

As you assess various growth hacking techniques powered by machine learning, you must actively screen for two primary types of bias:

- Salience Bias: The AI model prioritizes the most frequent data points and ignores the nuances of minority segments. If your AI-generated ad copy only appeals to a narrow demographic because that’s what the historical data reflects, you are alienating potential customers and giving up market share.

- Knowledge Bias: The underlying model lacks context about specific cultures, communities, or conditions. A highly publicized example in recent years involved AI skin cancer detection tools that failed on darker skin tones. For teams working alongside healthcare growth hacking agencies, failing to audit AI tools for knowledge bias isn’t just an embarrassment; it’s a massive medical and legal liability.
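A quick screen for salience bias is to compare each segment's share of your training data with its share of the audience you actually want to reach. This is a minimal sketch under stated assumptions: the segment labels, audience shares, and the 0.5 tolerance are all hypothetical values chosen for illustration.

```python
from collections import Counter
from typing import Dict, List


def underrepresented_segments(
    training_labels: List[str],
    audience_share: Dict[str, float],
    tolerance: float = 0.5,
) -> List[str]:
    """Flag segments whose share of the training data is below
    `tolerance` times their share of the target audience."""
    counts = Counter(training_labels)
    total = len(training_labels)
    flagged = []
    for segment, expected in audience_share.items():
        observed = counts.get(segment, 0) / total if total else 0.0
        if observed < tolerance * expected:
            flagged.append(segment)
    return flagged


# Hypothetical data: segment label of each historical record behind the ad-copy model.
training_labels = ["18-34"] * 70 + ["35-54"] * 27 + ["55+"] * 3
# Hypothetical share of each segment in the market you want to reach.
audience_share = {"18-34": 0.40, "35-54": 0.35, "55+": 0.25}

print(underrepresented_segments(training_labels, audience_share))
```

A flagged segment does not prove the model is biased, but it tells you where to look first when reviewing generated copy.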

Future-Proofing for 2026 and Beyond: Speculative Tech is Now Reality

What was considered “speculative tech” just two years ago is actively being pitched to CMOs today. If you are comparing marketing platforms, you need an ethics framework robust enough to handle these emerging categories:

Neuromarketing AI

Vendors are now offering tools that use device webcams to read real-time emotional and biometric cues, dynamically adjusting landing page copy or video pacing based on a user’s pupil dilation or micro-expressions. This is a bright ethical red line. Explicit, unbundled consent is mandatory, and the EU AI Act prohibits AI techniques that manipulate people in ways that distort their decisions and cause harm, which puts emotion-driven personalization squarely in the danger zone.

Deepfake Brand Ambassadors

The cost of rendering photorealistic synthetic humans has plummeted, driving a surge in ecommerce growth hacking strategies where brands use AI avatars instead of human influencers. Transparency is non-negotiable. Leading compliance frameworks now mandate a persistent “Not-Human” or “AI-Generated” disclosure label on all synthetic ambassador content to prevent consumer deception.
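A disclosure rule like this is easy to enforce as a pre-publish gate. The sketch below assumes each asset carries a flag saying whether it is synthetic; the `Asset` class and its fields are hypothetical, and checking the caption text is a simplification of a truly persistent on-screen label.

```python
from dataclasses import dataclass

# Labels named in the text above; matching is case-insensitive.
DISCLOSURE_LABELS = ("AI-Generated", "Not-Human")


@dataclass
class Asset:
    """A piece of campaign content (field names are illustrative)."""
    asset_id: str
    is_synthetic: bool  # True if the ambassador or content is AI-generated
    caption: str


def has_disclosure(asset: Asset) -> bool:
    """Synthetic assets must carry a disclosure label; human-made assets pass."""
    if not asset.is_synthetic:
        return True
    caption = asset.caption.lower()
    return any(label.lower() in caption for label in DISCLOSURE_LABELS)


def blocked_assets(assets):
    """Return the IDs of assets that should not be published yet."""
    return [a.asset_id for a in assets if not has_disclosure(a)]


# Hypothetical publishing queue.
queue = [
    Asset("a1", True, "Meet Mia, our AI-Generated ambassador"),
    Asset("a2", True, "Meet Leo"),
    Asset("a3", False, "Behind the scenes with the team"),
]
print(blocked_assets(queue))
```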

Building Your 2026 Marketing AI Ethics Toolkit

Understanding the risks is only half the battle; your team needs practical mitigation tools. Don’t rely solely on your AI vendor’s word that their system is “fair.” Start building an internal toolkit.

Integrating open-source bias detection toolkits like Fairlearn, Aequitas, or IBM’s AI Fairness 360 (AIF360) allows your marketing operations team to audit campaign algorithms independently. You can explore a broader curated list of marketing safety and automation tools over on our swipe resources to find the exact integration fit for your current tech stack.
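To get a feel for what these toolkits measure, here is a hand-rolled version of one common group-fairness metric, the demographic parity difference: the gap between the highest and lowest rate at which groups receive a favorable outcome. This is a plain-Python sketch, not any library's API, and the campaign log and group labels are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List


def selection_rates(shown: List[int], groups: List[str]) -> Dict[str, float]:
    """Fraction of users in each group who were shown the offer (1) versus not (0)."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for decision, group in zip(shown, groups):
        totals[group] += 1
        positives[group] += decision
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_difference(rates: Dict[str, float]) -> float:
    """Gap between the highest and lowest group selection rate (0.0 means parity)."""
    return max(rates.values()) - min(rates.values())


# Hypothetical campaign log: 1 = the model chose to show the premium offer.
shown = [1, 1, 1, 0, 1, 0, 0, 0, 1, 0]
groups = ["A", "A", "A", "A", "A", "B", "B", "B", "B", "B"]

rates = selection_rates(shown, groups)
gap = demographic_parity_difference(rates)
print(rates, round(gap, 2))
```

A large gap is a signal to investigate, not proof of unfair treatment; what counts as acceptable depends on the campaign and should be agreed with legal and compliance before launch.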

FAQ: Buyer Objections & Practical Governance

Doesn’t strict AI governance slow down our speed-to-market?

In the short term, mapping out a governance policy takes time. But in the long run, it massively accelerates deployment. When your team has a clear “Ethics Checklist,” they don’t have to pause every campaign to wait for a legal review. Governance provides the guardrails that allow your marketers to run fast and safely.

Our legal team handles GDPR. Isn’t that enough to cover our AI usage?

No. While data privacy (GDPR, CCPA) focuses on how consumer data is collected and stored, AI regulations (like the EU AI Act) focus on what the algorithm does with that data. You can be 100% GDPR compliant but still violate AI ethics laws by using transparently collected data to build manipulative, subliminal targeting models.

How do we know if an AI vendor’s bias mitigation is actually effective?

Ask them for their model cards and historical Bias Impact Assessments. A trustworthy vendor will be able to show you exactly how they tested for bias across different demographics during their model’s training phase. If they claim their AI is “bias-free” without providing the testing documentation, that’s an immediate red flag.

Your Next Steps Toward Confident AI Adoption

The divide between high-performing marketing teams and struggling ones in 2026 isn’t based on who has the most AI tools—it’s based on who trusts their tools the most.

If you are currently evaluating AI solutions, stop treating ethics as an afterthought. Start by establishing your team’s baseline. Download a standard AI Marketing Governance Checklist, audit your current legacy platforms against the EU AI Act’s manipulation standards, and begin introducing bias detection layers into your QA process.

Innovation without responsibility is just liability waiting to happen. Equip your team with the right guardrails today, so you can confidently scale your AI marketing efforts tomorrow.