Picture this: You are launching a major Q2 campaign. You use a popular generative AI tool to draft the copy and create the lifestyle images. It saves your marketing team days of work. But when you hit publish, the backlash is immediate. The images rely heavily on outdated cultural stereotypes, and the tone sounds uncannily like a generic corporate bot.

You didn’t just save time; you unknowingly compromised your brand’s integrity.

As we navigate 2026, generative AI is no longer a novelty—it is a baseline capability for marketers, startup founders, and content creators. Yet, while the technology has matured, the ethical guardrails have often lagged behind. AI is incredibly powerful, but it lacks human empathy, cultural nuance, and an inherent moral compass.

Ethical AI in content creation isn’t just a compliance checklist or a buzzword. It is a proactive strategy to build trust, maintain legal safety, and ensure your brand sounds like your brand. In this guide, we are going to demystify AI ethics, unpack how algorithmic bias infiltrates marketing materials, and give you practical frameworks to keep your content authentic and responsible.

Understanding Ethical AI: The Core Foundations

At its core, ethical AI in content creation means deploying artificial intelligence in a way that respects human rights, promotes fairness, ensures transparency, and maintains accountability.

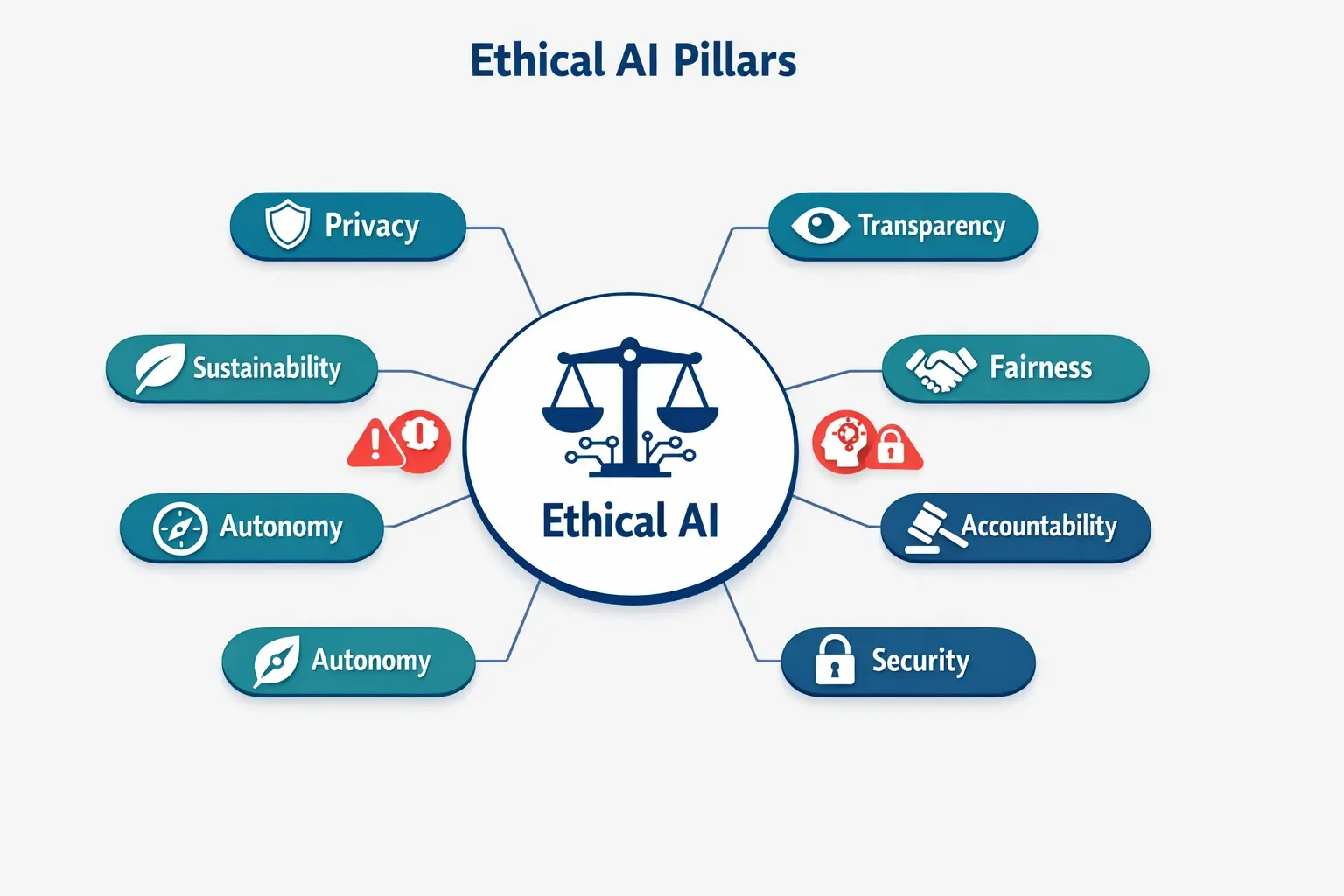

It helps to look at this through the lens of the 5 Pillars of Ethical AI:

- Fairness: Ensuring AI outputs do not discriminate or amplify harmful stereotypes.

- Transparency: Being open about when and how AI is used (often called the principle of disclosure).

- Accountability: Recognizing that while an AI might generate the content, a human is ultimately responsible for publishing it.

- Privacy: Safeguarding user data and ensuring proprietary brand information isn’t accidentally fed into public LLM training data.

- Sustainability: Acknowledging the environmental impact of training and running massive AI models, and optimizing your usage accordingly.

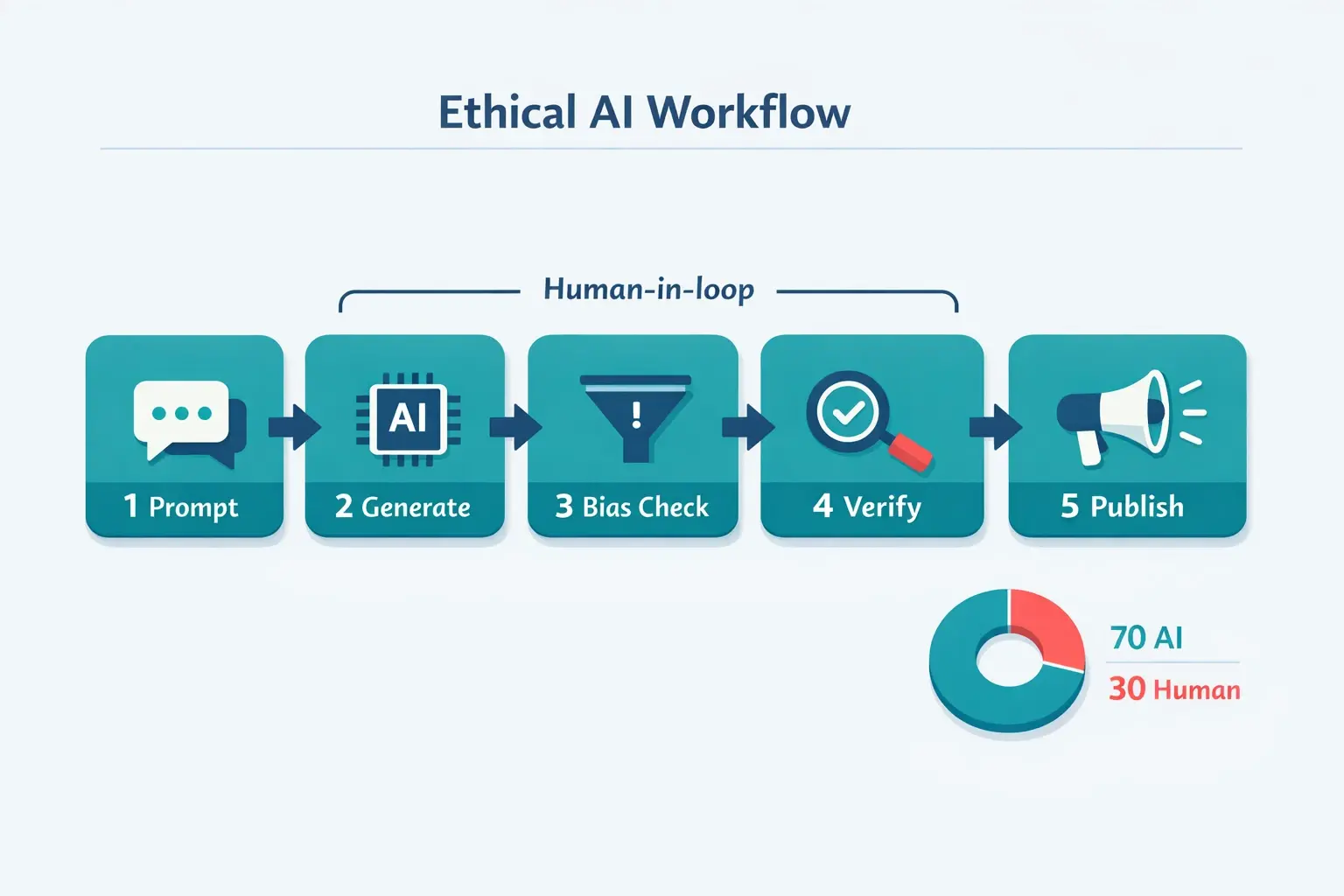

To uphold these pillars, the gold standard in 2026 is the “Human in the Loop” (HITL) imperative. A helpful benchmark is the “30% rule”: Let AI automate the 70% of the work that involves research, structuring, and drafting. Reserve the remaining 30% for humans to inject creativity, verify facts, and apply ethical judgment.

The Anatomy of AI Bias: Where It Hides

A common misconception is that artificial intelligence is inherently objective. In reality, AI models are trained on massive datasets scraped from the internet—a reflection of humanity with all its historical prejudices, structural inequalities, and biases intact.

Bias doesn’t magically appear; it is inherited. It usually manifests in content creation in a few ways:

- Representational Bias: Asking an image generator for “a successful CEO” and consistently receiving images of older white men.

- Linguistic Bias: Using language models that default to Western-centric idioms or misunderstand cultural context, leading to tone-deaf localization.

- Confirmation Bias via Prompts: If you feed an AI a heavily skewed premise (“Write an article about why millennials are lazy workers”), it will tend to accept the premise and may hallucinate facts to support it.

To stop bias before it reaches your audience, you need to intercept it at the prompt level.

Practical Mitigation: Your Ethical Content Workflow

If you want to ensure your content is inclusive and accurate, you need a workflow that treats AI as an assistant, not an autonomous creator. Here is how to build that framework.

1. Inclusive Prompt Engineering

How you ask the question dictates the fairness of the answer. Mastering prompt engineering for marketing requires moving away from generic commands to highly specific, constraint-driven instructions.

The “Before & After” Prompt Playground:

- Generic (High risk of bias): “Generate an image of a marketing team brainstorming.” (Result: Often defaults to homogenous groups in stereotypical startup settings.)

- Inclusive (Low risk of bias): “Generate a photorealistic image of a 5-person marketing team brainstorming in a modern office. Ensure the team is diverse in age, gender, and ethnic background. Include one person utilizing a wheelchair. The mood should be collaborative and professional.”
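One way to make the inclusive version repeatable is to assemble prompts from explicit fields rather than typing them freehand. The sketch below is a minimal illustration in plain Python. `ImagePromptSpec`, `join_with_and`, and `build_image_prompt` are hypothetical names invented for this example, not part of any image-generation tool, and the resulting string would still need to be pasted into or sent to whichever generator you use.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ImagePromptSpec:
    """Hypothetical container for the constraints an inclusive prompt spells out."""
    subject: str
    setting: str
    style: str = "photorealistic"
    diversity_axes: List[str] = field(default_factory=list)
    specific_inclusions: List[str] = field(default_factory=list)
    mood: str = ""


def join_with_and(items: List[str]) -> str:
    """Join items as natural-language text: 'a', 'a and b', or 'a, b, and c'."""
    if len(items) <= 2:
        return " and ".join(items)
    return ", ".join(items[:-1]) + ", and " + items[-1]


def build_image_prompt(spec: ImagePromptSpec) -> str:
    """Assemble one explicit prompt string from the spec's constraints."""
    parts = [f"Generate a {spec.style} image of {spec.subject} in {spec.setting}."]
    if spec.diversity_axes:
        parts.append(f"Ensure the group is diverse in {join_with_and(spec.diversity_axes)}.")
    for inclusion in spec.specific_inclusions:
        parts.append(f"Include {inclusion}.")
    if spec.mood:
        parts.append(f"The mood should be {spec.mood}.")
    return " ".join(parts)


if __name__ == "__main__":
    spec = ImagePromptSpec(
        subject="a 5-person marketing team brainstorming",
        setting="a modern office",
        diversity_axes=["age", "gender", "ethnic background"],
        specific_inclusions=["one person utilizing a wheelchair"],
        mood="collaborative and professional",
    )
    print(build_image_prompt(spec))
```

Because every constraint is a named field, an empty `diversity_axes` list is easy to spot in review, which is harder when prompts live in someone's head.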

2. Diverse Data and Channel Strategy

Don’t rely entirely on AI for your worldview. Diversify where your content draws its inspiration. By incorporating insights from community forums, customer interviews, and platforms that are often overlooked as sources of direct audience sentiment, such as Quora, you inject real, diverse human perspectives that counterbalance algorithmic echo chambers.

3. The Rigorous Review Process

Never publish raw AI output. Establish a clear human-review checklist that asks:

- Does this content rely on stereotypes or generalizations?

- Are all statistics and “facts” generated by the AI backed up by primary sources? (Beware of AI hallucinations).

- Is the language accessible and inclusive?

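Staying current on the latest AI workflows, products, and features can help teams build checks into their CMS that alert editors to exclusionary language before it goes live. Note that toolkits such as Fairlearn and Amazon SageMaker Clarify measure bias in machine-learning models and datasets rather than in written copy, so they are most relevant if your team trains or fine-tunes its own models.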
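The checklist above can also be turned into a lightweight pre-publish gate. The following is a rough sketch, not a bias detector: it only records the reviewer's answers and flags sentences that appear to contain statistics so a human can check them against primary sources. `ReviewChecklist`, `sentences_with_statistics`, `review_blockers`, and the regular expression are all assumptions made for this illustration.

```python
import re
from dataclasses import dataclass
from typing import List

# Rough proxy for statistical claims: figures such as "73%" or "4.5 million".
STAT_PATTERN = re.compile(r"\b\d+(?:\.\d+)?\s*(?:%|percent|million|billion)")


@dataclass
class ReviewChecklist:
    """Answers a human reviewer records for the three checklist questions."""
    no_stereotypes: bool
    stats_verified: bool
    language_inclusive: bool


def sentences_with_statistics(draft: str) -> List[str]:
    """Return the sentences in the draft that appear to contain a statistic."""
    sentences = re.split(r"(?<=[.!?])\s+", draft.strip())
    return [s for s in sentences if STAT_PATTERN.search(s)]


def review_blockers(draft: str, checklist: ReviewChecklist) -> List[str]:
    """List the reasons the draft should not be published yet (empty if none)."""
    blockers = []
    if not checklist.no_stereotypes:
        blockers.append("Stereotype and generalization check not confirmed.")
    if not checklist.stats_verified:
        # Only blocks when the draft actually contains something that looks like a statistic.
        for sentence in sentences_with_statistics(draft):
            blockers.append(f"Unverified statistic: {sentence}")
    if not checklist.language_inclusive:
        blockers.append("Accessible and inclusive language check not confirmed.")
    return blockers


if __name__ == "__main__":
    draft = "Our tool is popular. 73% of marketers say they use it daily! Teams love it."
    for blocker in review_blockers(draft, ReviewChecklist(True, False, True)):
        print(blocker)
```

The pattern will miss statistics phrased in words ("three in four marketers") and cannot judge stereotypes at all, so it supports the human review rather than replacing it.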

Beyond Bias: Designing Brand Authenticity

Mitigating bias is about avoiding harm; ensuring brand authenticity is about driving value. AI language models are trained to predict statistically likely next words. Left unguided, that pulls their output toward the typical, which is another way of saying average.

If you want your brand to stand out, you have to break the algorithm’s instinct to be generic.

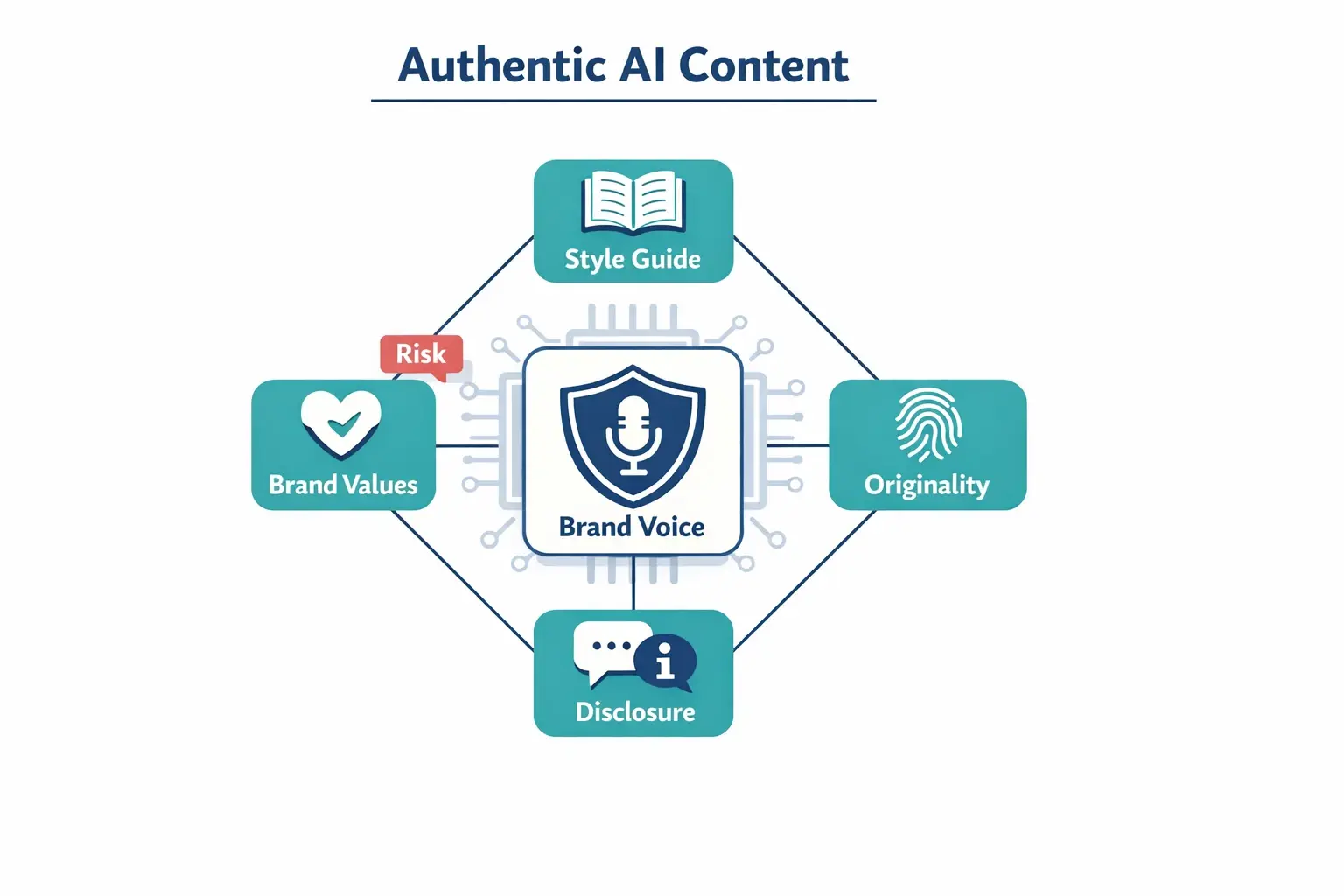

When looking at ecommerce brands that used growth hacking to scale rapidly, a common thread is a distinct, unmistakable brand voice. You can maintain this while using AI by building a “Brand Authenticity Framework”:

- Custom AI Personas: Feed your AI your specific brand guidelines, top-performing historical content, and tone descriptors. Tell it what you sound like, and more importantly, what you don’t sound like.

- The “Anecdote Injection”: AI cannot share a personal story. Always weave in real customer testimonials, proprietary data, or founder stories that an AI could never fabricate.

- Originality as a KPI: Track your content not just on SEO metrics, but on “brand voice alignment.” If it reads like anyone in your industry could have published it, it needs another human pass.
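The “Custom AI Personas” idea from the framework above can be captured as a reusable instruction block that travels with every request. This is a minimal sketch under stated assumptions: `build_persona_prompt` is a hypothetical helper, “Acme Outdoor” and its sample copy are made up, and how you pass the resulting text to a model (system prompt, custom instructions, or similar) depends on the tool you use.

```python
from typing import List


def build_persona_prompt(
    brand: str,
    sounds_like: List[str],
    never_sounds_like: List[str],
    examples: List[str],
) -> str:
    """Build a persona instruction block from tone descriptors and past content."""
    lines = [f"You are drafting content for {brand}."]
    lines.append("Voice to aim for: " + "; ".join(sounds_like) + ".")
    # Stating what the brand does NOT sound like is as important as what it does.
    lines.append("Voice to avoid: " + "; ".join(never_sounds_like) + ".")
    if examples:
        lines.append("Reference excerpts from past content:")
        lines.extend(f"- {excerpt}" for excerpt in examples)
    return "\n".join(lines)


if __name__ == "__main__":
    print(
        build_persona_prompt(
            brand="Acme Outdoor",  # hypothetical brand
            sounds_like=["warm", "plainspoken"],
            never_sounds_like=["corporate jargon"],
            examples=["We test every tent in the rain."],
        )
    )
```

Keeping the persona in one function means the whole team sends the same voice rules, and changes to the guidelines happen in a single place.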

Advanced Considerations: Legal Guardrails and Disclosure

As we operate under modern regulations like the EU AI Act, the legal landscape surrounding AI content creation is stricter than ever.

Copyright and Intellectual Property

In 2026, the prevailing position, notably that of the U.S. Copyright Office, is that AI-generated content cannot be copyrighted if it lacks sufficient human authorship. Rules vary by jurisdiction, but if your business relies on proprietary assets, generating them exclusively with AI may leave your intellectual property unprotected. Furthermore, be cautious about feeding sensitive company data into public AI models, as this can breach privacy protocols.

Transparency and Disclosure

When should you disclose that content is AI-generated? The rule of thumb is grounded in consumer trust. For internal brainstorming or structuring an outline, disclosure isn’t necessary. However, for factual reporting, photorealistic imagery used in advertisements, or content offering medical/financial advice, transparency is critical. Disclosing “Image generated by AI” prevents deception and builds a foundation of honesty with your audience.
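The rule of thumb above is simple enough to encode as a lookup, which can be handy if your publishing workflow tags content by type. The sketch below reflects only the categories this article names; it is not derived from any regulation. `ContentUse` and `needs_disclosure` are hypothetical names for illustration.

```python
from enum import Enum


class ContentUse(Enum):
    """Content categories named in the rule of thumb above."""
    INTERNAL_BRAINSTORM = "internal brainstorming"
    OUTLINE = "structuring an outline"
    FACTUAL_REPORTING = "factual reporting"
    PHOTOREALISTIC_AD_IMAGE = "photorealistic advertising imagery"
    MEDICAL_OR_FINANCIAL_ADVICE = "medical or financial advice"


# Uses where the article treats transparency as critical.
DISCLOSURE_REQUIRED = {
    ContentUse.FACTUAL_REPORTING,
    ContentUse.PHOTOREALISTIC_AD_IMAGE,
    ContentUse.MEDICAL_OR_FINANCIAL_ADVICE,
}


def needs_disclosure(use: ContentUse, ai_generated: bool) -> bool:
    """Return True when AI-generated content of this type should carry a disclosure."""
    return ai_generated and use in DISCLOSURE_REQUIRED


if __name__ == "__main__":
    for use in ContentUse:
        status = "disclose" if needs_disclosure(use, ai_generated=True) else "no label needed"
        print(f"{use.value}: {status}")
```

A team with stricter standards could add more categories to `DISCLOSURE_REQUIRED`; the point is that the decision is written down once rather than made ad hoc per post.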

FAQ: Navigating AI Ethics in Content

Q: Will Google penalize my website for using AI-generated content?

A: Google’s 2026 guidelines focus on the quality of content, not the method of creation. Content that is purely generated by AI to manipulate search rankings (spam) will be penalized. However, high-quality, human-reviewed content that utilizes AI for drafting but provides genuine value, expertise, and authenticity will perform well.

Q: Does my small business really need an internal AI ethics policy?

A: Yes. Even a one-page document outlining how your team uses AI, what data you are allowed to input, and who holds the final review responsibility can prevent catastrophic public relations issues and legal headaches down the line.

Q: How do I know if an AI image generator is stealing artists’ work?

A: Stick to reputable enterprise tools that offer indemnification and are transparent about their training datasets (e.g., tools that only train on licensed or public domain content). Avoid tools that allow you to prompt “in the exact style of [Living Artist].”

Your Next Steps Toward Responsible Creation

Embracing ethical AI in content creation doesn’t slow you down—it actually speeds up your path to building genuine trust with your audience. By understanding the sources of bias, mastering inclusive prompts, and implementing a rigorous human review process, you can leverage the immense power of AI without sacrificing your brand’s soul.

Ready to build your ultimate marketing tech stack responsibly? Dive deeper into our curated swipe resources to find the best unbiased tools, frameworks, and guides designed to help you scale your marketing efforts with integrity and authenticity.